How Aries 6 PCIe Smart Gearbox enables hyperscalers to scale I/O for AI workloads while preserving qualified PCIe 5 frontend NIC infrastructure

AI Infrastructure Is Outgrowing Yesterday’s I/O Architecture

AI training and inference workloads don’t just demand faster GPUs—they demand faster systems. As model sizes and datasets explode, every critical path matters: moving data into accelerators, synchronizing across nodes, and pushing results back into the network fabric.

That’s why the industry is racing to PCIe® 6.0, which doubles the per-lane signaling rate from 32.0 GT/s to 64.0 GT/s. Two innovations make this possible: PAM4 modulation doubles throughput by encoding two bits per symbol instead of one, and FLIT mode with integrated error correction maintains data reliability at these speeds.
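
To make the modulation point concrete, here is a minimal Python sketch of how PAM4 packs two bits into each transmitted symbol while NRZ carries one. The function names and the Gray-coded level mapping are illustrative assumptions for this post, not values quoted from the PCIe 6.0 specification.

```python
# Illustrative only: this Gray-coded level assignment is an assumption made
# for the sketch, not a mapping quoted from the PCIe 6.0 specification.
PAM4_LEVELS = {(0, 0): 0, (0, 1): 1, (1, 1): 2, (1, 0): 3}


def nrz_encode(bits):
    """NRZ: one symbol (two possible levels) per bit."""
    return list(bits)


def pam4_encode(bits):
    """PAM4: one symbol (four possible levels) per pair of bits."""
    if len(bits) % 2:
        raise ValueError("PAM4 needs an even number of bits")
    return [PAM4_LEVELS[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]


bits = [1, 0, 0, 1, 1, 1, 0, 0]
nrz_symbols = nrz_encode(bits)
pam4_symbols = pam4_encode(bits)
print(len(nrz_symbols), len(pam4_symbols))
```

The same bit stream needs half as many symbols under PAM4, which is how a lane can carry twice the data without doubling the symbol rate.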

But there’s a catch.

The I/O Architecture Tradeoff

Modern AI platforms face a fundamental tradeoff: lanes and physical space versus performance and bandwidth per lane. PCIe 6.0 dramatically improves bandwidth density—delivering 64 GT/s per lane compared to PCIe 5.0’s 32 GT/s. This means you can achieve the same aggregate bandwidth using half the lanes, freeing precious CPU I/O for additional GPUs, accelerators, or storage. In lane-constrained server designs where every x16 slot matters, this efficiency is critical. But this advantage only materializes when both ends of the connection support PCIe 6.0. Connect a Gen6 CPU to Gen5 endpoints, and you’re forced back to Gen5 lane efficiency—consuming twice the physical lanes to deliver the same bandwidth.
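
The "half the lanes" arithmetic can be sketched in a few lines of Python. This is a back-of-the-envelope model under stated assumptions: it treats 1 GT/s as 1 Gb/s of raw rate per lane in one direction and ignores encoding, FLIT, and protocol overhead. The helper names are hypothetical, and real links only come in fixed widths such as x8 and x16.

```python
import math

GT_PER_LANE = {"Gen5": 32, "Gen6": 64}  # GT/s per lane, as stated in the text


def raw_link_gbps(gen, lanes):
    """Raw one-direction link rate in Gb/s (assumes 1 GT/s ~ 1 Gb/s, no overhead)."""
    return GT_PER_LANE[gen] * lanes


def lanes_needed(gen, target_gbps):
    """Fewest lanes of a given generation that reach a target raw rate."""
    return math.ceil(target_gbps / GT_PER_LANE[gen])


target = raw_link_gbps("Gen5", 16)  # raw rate of a Gen5 x16 link
print("Gen5 lanes needed:", lanes_needed("Gen5", target))
print("Gen6 lanes needed:", lanes_needed("Gen6", target))
```

Under these assumptions a Gen6 x8 link matches the raw rate of a Gen5 x16 link, which is the lane saving described above.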

The NIC Challenge: It’s Not “Just a Card”

For hyperscalers, front-end NICs—the adapters connecting servers to the data center network fabric—aren’t commodity components you swap on a whim. These are platform-level assets: custom hardware, meticulously tuned firmware, integrated telemetry hooks, and operational workflows refined over years and validated at fleet scale.

Even as hyperscalers push hosts and accelerators to PCIe 6.0 aggressively, the NIC ecosystem often stays on PCIe 5.0 longer because it’s deeply woven into how the platform is built, tested, and operated.

This creates a painful reality: you want PCIe 6.0 speed now, but you can’t afford to disrupt a networking stack that’s already qualified and deployed across thousands of servers.

The Hidden Cost of Backward Compatibility

PCIe is backward compatible, which sounds great—until you understand what that actually means.

Yes, a PCIe 6 host will connect to a PCIe 5 NIC. No, you don’t get full Gen6 link capability—specifically, you lose the bandwidth efficiency that PCIe 6 was designed to deliver.

When a PCIe 6 root complex connects directly to a PCIe 5 endpoint, the link negotiates down and operates at PCIe 5 data rates. This means:

- Bandwidth is capped at Gen5 levels (32 GT/s per lane), even though your platform is Gen6-capable

- Lane efficiency is halved: to match Gen6 aggregate bandwidth, you need twice as many physical lanes running at Gen5 speeds

- CPU I/O budget is constrained—lanes that could serve additional GPUs or accelerators are instead consumed delivering Gen5 performance

You’ve deployed Gen6 hosts and accelerators, but your CPU↔NIC connectivity remains anchored to Gen5 bandwidth density—right where AI infrastructure increasingly demands more headroom.
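
The negotiate-down behavior can be modeled very simply. The sketch below is a deliberately reduced illustration, not the real link training state machine: it assumes the link settles on the highest data rate and widest width that both ends support. The `Port` type and `negotiate` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Port:
    name: str
    max_gt_s: int   # highest data rate the port supports, in GT/s
    max_width: int  # widest link the port supports, in lanes


def negotiate(a: Port, b: Port):
    """Simplified model: the link runs at the highest rate and width
    that both ends support."""
    return min(a.max_gt_s, b.max_gt_s), min(a.max_width, b.max_width)


host = Port("Gen6 root complex", 64, 16)
nic = Port("Gen5 NIC", 32, 16)
rate, width = negotiate(host, nic)
print(f"{host.name} <-> {nic.name}: {rate} GT/s x{width}")
```

In this model the Gen6 host's extra per-lane rate goes unused as soon as the other end of the link is Gen5.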

The Lane-Budget Problem

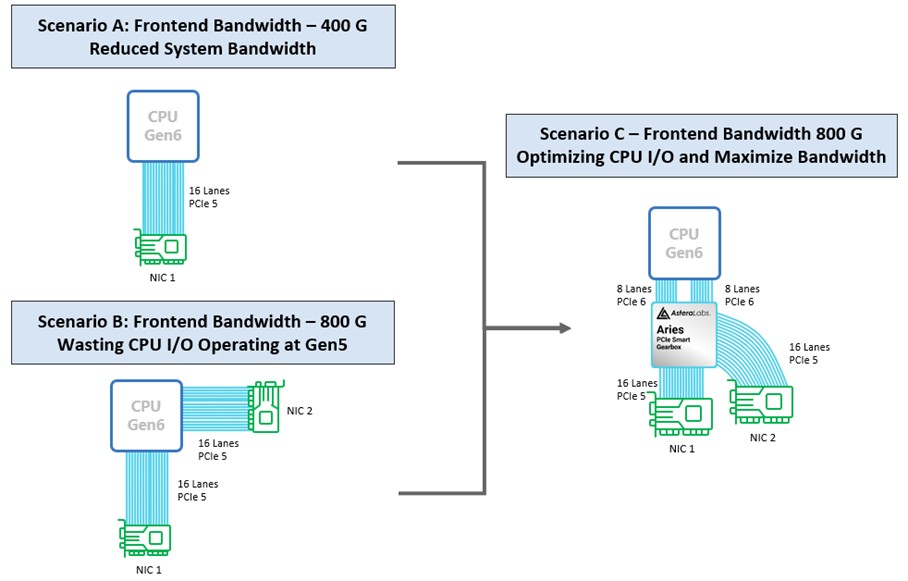

Let’s examine three common deployment patterns and their tradeoffs.

Scenario A: Reduced Bandwidth (Direct Attach, Single NIC)

- Topology: Gen6-capable CPU → Single NIC using PCIe 5 x16 (direct connection)

- What happens: The link trains and runs at Gen5 because the NIC is Gen5.

- The pain: Your connection is capped at Gen5 max PCIe link bandwidth, even though the host platform supports Gen6. You’re leaving performance on the table.

Scenario B: Wasted CPU I/O (Direct Attach, Dual NICs)

- Topology: CPU → NIC 1 (PCIe 5 x16) + CPU → NIC 2 (PCIe 5 x16), both direct

- What happens: You increase total networking capability by adding a second NIC.

- The pain: You consume two x16 CPU links (32 lanes) for networking alone. That’s a massive chunk of your platform I/O budget, and those lanes could otherwise go to accelerators, storage, or other critical endpoints. In lane-constrained designs, this limits your architectural flexibility.

Scenario C: Optimized I/O Using Aries PCIe Smart Gearbox

- Topology:

  - CPU → Aries PCIe Smart Gearbox using two PCIe 6 x8 upstream connections

  - Aries → Two NICs, each using PCIe 5 x16 downstream connections

- What happens: You leverage Gen6 lane efficiency upstream while preserving the existing Gen5 NIC interface downstream.

- The win: Support two Gen5 NICs for aggregate throughput scaling—without consuming two full x16 Gen5 links directly from the CPU.
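
The three scenarios can be compared side by side with a small Python sketch. It uses the link widths and rates given above and the same simplification as elsewhere in this post: 1 GT/s is treated as 1 Gb/s of raw rate per lane in one direction, with no encoding or protocol overhead. The dictionary layout and function names are hypothetical.

```python
GEN5_GT, GEN6_GT = 32, 64  # GT/s per lane, as stated in the text

# Each scenario: (CPU-side links, NIC-side links); a link is (GT/s, lanes).
# Direct-attach CPU links run at the Gen5 rate because the NIC is Gen5.
SCENARIOS = {
    "A: direct, one NIC": ([(GEN5_GT, 16)], [(GEN5_GT, 16)]),
    "B: direct, two NICs": ([(GEN5_GT, 16)] * 2, [(GEN5_GT, 16)] * 2),
    "C: gearbox, two NICs": ([(GEN6_GT, 8)] * 2, [(GEN5_GT, 16)] * 2),
}


def raw_gbps(links):
    """Sum of raw one-direction rates, treating 1 GT/s as 1 Gb/s per lane."""
    return sum(rate * lanes for rate, lanes in links)


def summarize(cpu_links, nic_links):
    cpu_lanes = sum(lanes for _, lanes in cpu_links)
    # The end-to-end raw rate is bounded by the slower side of the path.
    path_gbps = min(raw_gbps(cpu_links), raw_gbps(nic_links))
    return cpu_lanes, path_gbps, path_gbps / cpu_lanes


for name, (cpu_links, nic_links) in SCENARIOS.items():
    lanes, gbps, per_lane = summarize(cpu_links, nic_links)
    print(f"{name}: {lanes} CPU lanes, {gbps} Gb/s raw, {per_lane:.0f} Gb/s per CPU lane")
```

Under these assumptions, Scenario C reaches the raw NIC-facing rate of Scenario B while using the CPU lane count of Scenario A.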

How Aries PCIe Smart Gearbox Changes the Game

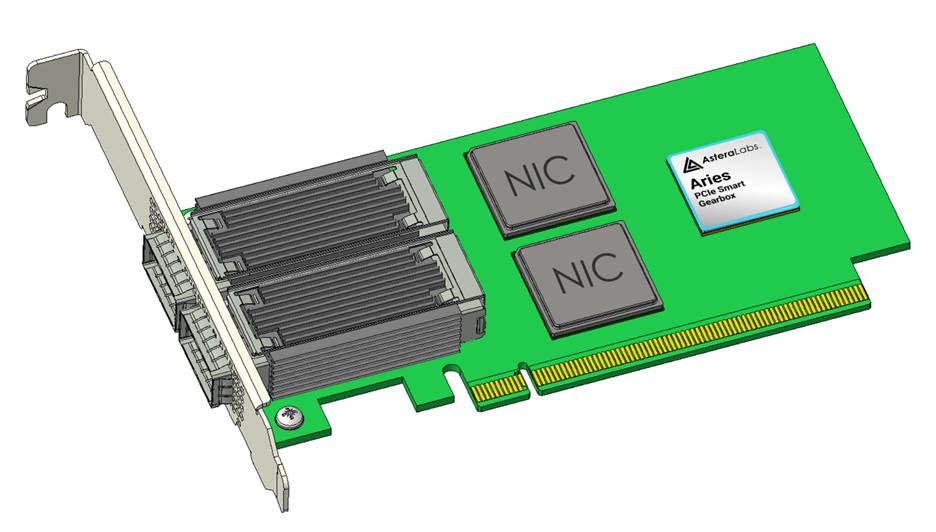

Aries PCIe Smart Gearbox functions as an intelligent PCIe bridge that terminates PCIe links and efficiently forwards transactions between an upstream Gen6 domain and downstream Gen5 domains.

What It Does in This Deployment:

Upstream connectivity:

- Two independent PCIe 6 x8 links from the CPU into Aries

- At 64 GT/s per lane, each x8 link delivers bandwidth comparable to a Gen5 x16 connection*

Downstream connectivity:

- Two independent PCIe 5 x16 links from Aries to two NICs

- At 32 GT/s per lane, each NIC retains its familiar Gen5 x16 interface—no changes required

Why This Matters:

This architecture lets a Gen6 platform support two Gen5 NICs without wiring two full x16 Gen5 links directly to the CPU—preserving precious CPU lanes for other critical functions while scaling aggregate network throughput to meet Gen6-era platform demands.

Why This Matters Now: Vera Rubin and the Next Wave of AI Platforms

The “bridge, don’t replace” value proposition becomes even more critical in next-generation platform architectures like NVIDIA’s Vera Rubin ecosystem, where lanes and physical space are at a premium.

We couldn’t have said it better than SemiAnalysis did: “For most hyperscalers’ deployments, the BlueField-4 module will be replaced with their in-house frontend networking module or simply with a CX-9 which is cheaper.”1

Aries 6 Gearbox enables dual-NIC scenarios by bridging PCIe 6 x8 connections to PCIe 5 x16 NIC connections—meeting custom Vera Rubin deployment needs while preserving throughput and working within tight platform constraints.

Move Fast on PCIe 6—Without Breaking the Networking Stack

AI is compressing infrastructure upgrade timelines. Hyperscalers need PCIe 6 hosts and accelerators online quickly to maximize throughput, but they also need to keep proven NIC infrastructure stable and operational.

Aries PCIe Smart Gearbox bridges that reality:

✅ Preserves the network infrastructure built on PCIe 5 NIC connectivity—no hardware or firmware disruption

✅ Enables efficient PCIe 6 lane utilization upstream—conserving precious CPU I/O bandwidth

✅ Closes the capability gap that occurs when PCIe 6 platforms pair directly with PCIe 5 endpoints

✅ Accelerates time to deployment without forcing disruptive NIC replacement cycles

The bottom line: Deploy PCIe 6 where it matters most, preserve what’s already qualified, and bridge the gap intelligently.

Ready to Scale I/O Without Disrupting Your Fleet?

Learn more about how Aries PCIe Smart Gearbox can help your organization navigate the PCIe 6 transition while maintaining operational continuity.

Contact us to discuss your platform architecture.

1 Wega Chu, Dylan Patel, Daniel Nishball, et al., “Vera Rubin – Extreme Co-Design: An Evolution from Grace Blackwell Oberon,” SemiAnalysis, February 25, 2026, https://newsletter.semianalysis.com/p/vera-rubin-extreme-co-design-an-evolution